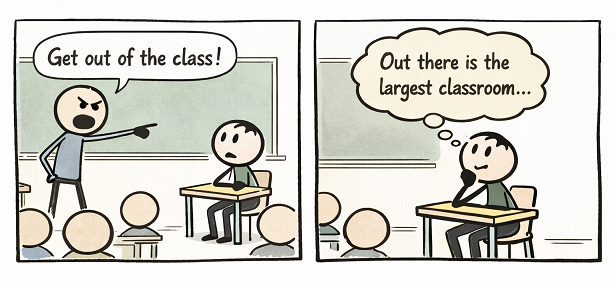

Why real learning begins when classrooms stop defining its boundaries

If learning happens only inside classrooms, then how do entrepreneurs learn from failure, scientists from doubt, or leaders from crisis? The answer is simple: the world is the largest classroom we will ever enter.

For much of history, classrooms were the natural center of learning. Knowledge was scarce, books were limited, and professors served as the primary custodians of expertise. That model worked well for its time. But the context in which education operates today has changed fundamentally.

We now live in an era where intellect and experience are visible everywhere. Business leaders make high-stakes decisions in public view. Entrepreneurs experiment, fail, and adapt openly. Scientists debate ideas and revise conclusions as evidence evolves. Philanthropists confront ethical dilemmas that no textbook can fully anticipate. These lessons unfold daily, accessible to anyone willing to observe and reflect.

This is not an argument against classrooms or formal education. It is an argument against treating classrooms as the boundary of knowledge.

For students, this means recognizing that learning does not end with lectures or exams. Textbooks provide frameworks, but the world teaches consequences. Real understanding develops when students observe how theory collides with reality: how uncertainty is managed, how values influence decisions, and how imperfect humans navigate complex systems.

For professors, this shift elevates rather than diminishes their role. In a world of abundant information, educators are no longer merely transmitters of content. They become guides who help students interpret complexity, connect ideas across disciplines, and develop judgment. The most impactful classrooms are those that encourage curiosity, discussion, and real-world sense-making, not just correct answers.

For academic leaders, this moment presents a strategic opportunity. Students are already learning from the world, whether institutions acknowledge it or not. Universities that remain relevant will be those that intentionally integrate real-world observation into teaching, reward reflective learning, and position classrooms as gateways to lived experience rather than isolated silos of knowledge.

The world teaches lessons no curriculum can fully capture: how power is exercised, how ethics are tested, how innovation emerges from failure, and why judgment matters when rules fall short. These lessons cannot be memorized; they must be noticed, discussed, and reflected upon.

In this context, the classroom becomes a launchpad rather than a destination. Professors become sense-makers rather than gatekeepers. Students become active observers rather than passive recipients.

The most valuable skill education can cultivate today is not mastery of information, but the ability to learn continuously from life itself.

Because in the end, the world is not just outside the classroom.

It is the classroom.